Showing results for tags 'exp gdc beast'.

So here is my eGPU project.

Notebook: HP Pavilion DV7 6103EG

- BIOS: HP Insyde F.1B, modded by donovan6000
- CPU: Intel Core i7-2630QM
- IGP: HD3000
- DGP: ATI 6770M
- RAM: 8GB
- Storage: ADATA SSD S599 64GB
- OS: 64-bit Windows 10

eGPU gear

- EXP GDC Beast
- Dell DA-2 220W
- Palit GTX680 4GB - PCI-E 2 x16 @ x1 2.0
- DIY Setup 1.3

Results

- 3dmark06 = 18319
- 3dmark11.GPU = 8222
- Heaven: 2454
- Firestrike: 5357

Sorry, in the title I'm not sure about mPCIe 1 or 2 and the bandwidth.

Unfortunately it did not work plug and play, although it did work flawlessly about 3 times in Gen2 mode without changing anything or using Setup 1.3. In the end I went with Setup 1.3 and did the recommended PCI compaction and chainload, but it still worked only a few times at random, even when I set Gen1 and disabled the dGPU. Then I tried the commands to reset and init the iport in Setup 1.3, and that seems to have solved my problem. I'm not sure I need every command; it is just my common sense. It is working like a charm at the moment without any problems.

My final, working Startup.bat:

    call iport dGPU off
    call iport gen2 2
    call vidwait 60
    call vidinit -d %eGPU%
    call iport reset 2
    call iport init 2
    call pci
    :end
    call chainload mbr

Just wondering whether my results are good and I can leave it like this, or whether I should go ahead and make more modifications, like tweaking more in 1.3 or changing the PSU. Or, if this is the max I can get with the 680, is it worth getting a stronger card, or is there no point because of the limits of my chipset and CPU? My Windows Power Plan is set to High Performance. Thanks for reading.
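On the bandwidth question in this post, here is a minimal sketch of the theoretical per-direction link bandwidth. It assumes the standard PCIe signalling figures (2.5 GT/s per lane for Gen1, 5 GT/s per lane for Gen2, both with 8b/10b encoding). The function name and lookup table are illustrative only and are not part of Setup 1.3. Real-world throughput will be lower because of protocol overhead.

```python
# Theoretical PCIe link bandwidth per direction (illustrative sketch).
# Assumed figures: Gen1 = 2.5 GT/s per lane, Gen2 = 5.0 GT/s per lane,
# both using 8b/10b encoding (8 data bits per 10 transferred bits).
TRANSFER_RATE_GT_S = {1: 2.5, 2: 5.0}
ENCODING_EFFICIENCY = 8 / 10


def link_bandwidth_mb_s(gen: int, lanes: int = 1) -> float:
    """Return the theoretical bandwidth in MB/s for one direction."""
    gigabits_per_s = TRANSFER_RATE_GT_S[gen] * ENCODING_EFFICIENCY * lanes
    return gigabits_per_s * 1000 / 8  # Gbit/s -> MB/s


for gen in (1, 2):
    print(f"x1 Gen{gen}: {link_bandwidth_mb_s(gen):.0f} MB/s")
```

Under these assumptions an x1 link moves roughly 250 MB/s in Gen1 mode and 500 MB/s in Gen2 mode, which is why the `iport gen2` step in the Startup.bat can matter for a card like the GTX680.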

- 18 replies

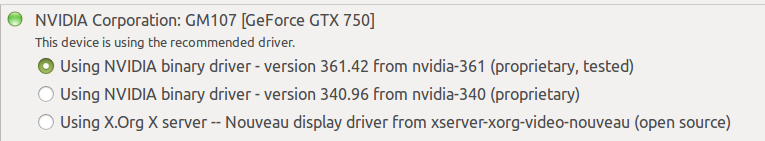

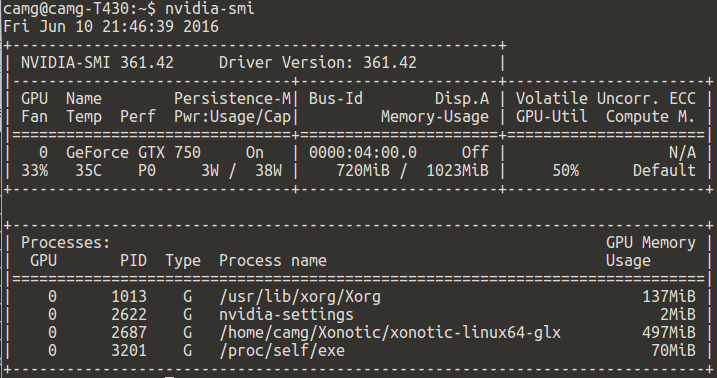

NOTE: This post has been updated to reflect the latest state of this implementation...

Hello mates, I am delighted to share a bit of my new successful implementation, after fighting my way through previous eGPU implementations on several Linux distributions, from Ubuntu Mate 14 & 15 to Linux Mate and CentOS 5 and 6. I only documented one of them. I had to share this experience, mostly because I am amazed by what the community behind Ubuntu Mate 16.04 has achieved. So bear with me.

System Specs

- Lenovo T430
- Intel Core i5-3320M at 2.6 GHz
- 8 GB DDR3L 12800
- 16 GB DDR3L 12800
- Intel HD 4000

EGPU:

- Zotac GeForce GTX 750 1GB
- EVGA GeForce GTX 950 SC+ 2GB
- KFA2 GeForce GTX 970 OC Silent "Infin8 Black Edition" 4GB
- EXP GDC v8.3 Beast Express Card
- Seasonic 350 watts 80+ bronze

Display:

- Internal LCD 1600x900
- Dell UltraSharp 2007FP - 20.1" LCD Monitor

Procedure:

I prepared the hardware as usual, feeding power to the Beast adapter from the PSU and plugging these into the laptop's ExpressCard slot. The Ubuntu Mate 16.04 installation I used is only a couple of weeks old and is loaded only with the full stack of Python and web tools I need. For the integrated graphics card, the stock open source drivers are used.

For the EGPU... I was ready to perform the usual steps: disable the nouveau drivers, reboot, switch to run level 3, install the CUDA drivers, etc. But, following advice I read on an Ubuntu/Nvidia forum, and very sceptical, I installed the most recent proprietary drivers for my card. Reboot. Boom! I am done.

Even functionality previously not available in Linux is now available... As you can see from the last screenshot, the drivers now report which processes are being executed on the GPU, something previously reserved for high-end GPUs like Teslas. That screenshot also shows evidence of the computation being performed on the GPU while the display is rendered on my laptop's LCD.
This screenshot also shows how the proprietary driver can now display the GPU temperature as well as other useful data.

For those of you into CUDA computing, I can report that CUDA toolkit 7.5 is now available in the Ubuntu repository and also installs and performs without any issue. I went from zero to training TensorFlow models on the GPU in 30 minutes or so. Amazing! I could expand this post if anyone needs more info, but it was very easy. Cheers!

After upgrading the GPU two times, my system is now capable of handling Doom fairly easily. Now some benchmark results.

| RAM | eGPU | PCIe gen | 3DMark 11 | 3DMark 11 Graphics |
|---|---|---|---|---|
| 8 GB | GTX 750 | 2 | P3996 | 4095 |
| 16 GB | GTX 750 | 2 | P3994 | 4094 |
| 16 GB | GTX 950 | 1 | P5214 | 7076 |
| 16 GB | GTX 950 | 2 | P5249 | 7709 |
| 16 GB | GTX 970 | 1 | P7575 | 11202 |
| 16 GB | GTX 970 | 2 | P8176 | 12946 |

Now, the difference between Gen 1 and Gen 2 might not seem relevant from the results in the table. But when playing Doom there is a difference of around 15 fps on average between the two modes. This difference is even more noticeable during intense fights.
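To put the Gen 1 versus Gen 2 comparison in numbers, here is a small sketch that computes the relative gain in the 3DMark 11 graphics score for the two cards tested in both modes. The scores are copied from the benchmark results in this post; the dictionary layout and function name are only illustrative.

```python
# 3DMark 11 graphics scores from the benchmark results in this post,
# keyed by card and then by PCIe generation (all with 16 GB RAM).
graphics_scores = {
    "GTX 950": {1: 7076, 2: 7709},
    "GTX 970": {1: 11202, 2: 12946},
}


def gen2_gain_percent(scores: dict) -> float:
    """Percentage improvement of the Gen 2 score over the Gen 1 score."""
    return (scores[2] - scores[1]) / scores[1] * 100


for gpu, scores in graphics_scores.items():
    print(f"{gpu}: Gen 2 is {gen2_gain_percent(scores):.1f}% ahead of Gen 1")
```

On these figures the gain is about 8.9% for the GTX 950 and about 15.6% for the GTX 970, so the faster card benefits more from the wider link.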

- 10 replies

Hey Guys! I bought the EXP GDC v8.0 to use with my laptops:

Alienware M17x R2 (no iGPU)

- i7 920xm
- GTX 680M
- 16GB DDR3 RAM
- ExpressCard x1
- Windows 7 x64 Ultimate

Alienware M14x R2 (iGPU)

- i7 3840qm
- GT 650M
- 16GB DDR3 RAM
- mPCIe x2(?)
- Windows 7 x64 Ultimate

I am using:

- ZOTAC GTX 970
- 300w PSU
- DIY eGPU Setup 1.30

My problem is that Windows does not detect anything on either setup. No drivers are ever installed for a "Standard VGA Adapter", and I always check Device Manager. I have tried everything on the TechInferno webpage here, but nothing happens once in Windows. I have entered the DIY eGPU Setup (menu option) several times, trying what it recommends to get the graphics card to be seen, and messed around a bit with the settings, but none of that works either. Sometimes certain options will cause the laptop to hang and force me to reboot.

The ExpressCard and mPCIe slots of my laptops do work. I regularly use the ExpressCard slot with a USB 3.0 card, and I normally have a WLAN card and an mSATA SSD in the mPCIe slots (I have tried both slots extensively with the EXP GDC adapter).

The graphics card only boots up (with fans on full blast) if the PSU is plugged in after the BIOS has loaded. If I have it connected from a cold boot, the card will attempt to turn on with slow fans and then turn off, on either laptop. The graphics card is lightweight and fully inserted into the EXP GDC adapter. There is no switch on the PSU, so once everything is plugged in (and the laptop is on), it just goes.

I thought it would at least work on the M17x R2, since there is an Amazon review comment stating that it does. The only other thing I can mention is that the GTX 680M was never officially meant for the M17x R2, so I have to hack the NVIDIA display drivers to get NVIDIA driver support. I don't think this has anything to do with the eGPU detection though (especially since the DIY eGPU Setup does see the GTX 680M)... And, if it helps, I do have a Dell 220w power brick I can use instead.
If anyone has any suggestions I can try, I will, as I really want this old laptop to run at its best before I move on. Thanks in advance!