Posts posted by Dr. AMK

-

-

10 hours ago, Tech Inferno Fan said:

Use the expresscard slot with a BPlus PE4C 3.0 for the most fuss free x1 2.0 (4Gbps) eGPU solution.

4 hours ago, Dr. AMK said:

Thank you @Tech Inferno Fan for your kind support. I'll give it a try.

Have you tried an eGPU with a GTX 1080 before, or has any other member done so, even with a different laptop? Will the card work at full performance? Can I overclock the card?

Best Regards,

I found this article about

ExpressCard 34 and ExpressCard 54

http://www.synchrotech.com/support/faq-expresscard.html

So will the bandwidth be OK for an eGPU with a GTX 1080? Is there any kind of bottleneck?

I have to do more research.
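To put the "x1 2.0 (4Gbps)" figure from the quoted reply in context, here is a rough Python sketch of the link arithmetic. The per-generation signalling rates and encodings are the standard PCIe values; the comparison against a desktop x16 3.0 slot is my own choice of reference point and not something from the thread. It only compares raw link bandwidth and says nothing about how much a given game would actually lose.

```python
# Per-lane signalling rate (GT/s) and line-encoding efficiency for each PCIe generation.
PCIE_GENERATIONS = {
    1: (2.5, 8 / 10),     # 8b/10b encoding
    2: (5.0, 8 / 10),     # 8b/10b encoding
    3: (8.0, 128 / 130),  # 128b/130b encoding
}


def pcie_bandwidth_mb_s(gen: int, lanes: int) -> float:
    """Usable one-direction bandwidth in MB/s, before protocol overhead."""
    rate_gt_s, efficiency = PCIE_GENERATIONS[gen]
    usable_gbit_s = rate_gt_s * efficiency * lanes
    return usable_gbit_s * 1000 / 8  # Gbit/s -> MB/s


expresscard_2 = pcie_bandwidth_mb_s(gen=2, lanes=1)  # the x1 2.0 ExpressCard link
desktop_slot = pcie_bandwidth_mb_s(gen=3, lanes=16)  # reference: desktop x16 3.0 slot

print(f"x1 2.0:  {expresscard_2:.0f} MB/s")
print(f"x16 3.0: {desktop_slot:.0f} MB/s")
print(f"ratio:   {desktop_slot / expresscard_2:.1f}x")
```

So the ExpressCard link carries 4 Gbit/s (500 MB/s) per direction, roughly one thirtieth of what a desktop x16 3.0 slot offers. Whether that matters in practice depends on how much data a given workload pushes over the bus.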

-

ExpressCard FAQ for ExpressCard 34 and ExpressCard 54

Contents

- What is ExpressCard?

- Differences between ExpressCard and PC Card

- Is ExpressCard backward compatible with PCMCIA PC Cards?

- Is PCMCIA PC Card forward compatible with ExpressCard?

- ExpressCard Dimensions

- ExpressCard Slotting

- Is there a way to stabilize ExpressCard/34 inside ExpressCard/54 slots?

- How does ExpressCard 2.0 differ from ExpressCard?

- ExpressCard versus PC Card Overview

- Is it possible to add ExpressCard slots to computers with PCIe?

- Are any hot-swap capable external solutions for SxS ExpressCards?

- Are any Thunderbolt solutions for ExpressCards?

What is ExpressCard?

ExpressCard is PCMCIA's (subsumed by the USB-IF) portable, removable, expansion technology to replace PC Card and PC CardBus (sometimes mistakenly called PCMCIA Cards). ExpressCard holds several advantages over PC Card some of which are enumerated below.

Differences between ExpressCard and PC Card

- ExpressCard features new interconnect technology

- ExpressCard utilizes two interconnect technologies, the most important of which is PCI Express (PCIe). ExpressCards featuring PCIe 1X technology are capable of 2.5Gb/s per direction, giving an ExpressCard operating in full duplex mode an approximate throughput of 250MB/s x 2, or 500MB/s, nearly quadrupling the effective speed of 32-bit PC CardBus. ExpressCard alternatively utilizes USB 2.0 for lower speed and less complex applications, with a maximum throughput of 480Mb/s.

- ExpressCard allows for much more bandwidth

- ExpressCards featuring PCIe 1X technology are capable of 2.5Gb/s per direction, allowing for realization of applications, especially host adapters, that underperformed or were impossible under PC CardBus. Examples of full throughput host adapters that bottlenecked CardBus, but don't tax ExpressCard at all, are FireWire 800 (IEEE 1394b) and Gigabit Ethernet (GbE). Additionally, ExpressCard will be able to handle the latest SATAe and other high end buses with relative ease.

- ExpressCard has superior power saving and management

- ExpressCard operates at lower voltages than PC Card with 1.5 and 3.3V baselines. This allows systems deploying ExpressCard technology to take full advantage of current low power utilization throughout.

- ExpressCard is a serial rather than a parallel bus

- ExpressCard follows the trend of PCI Express and SATA in transitioning from parallel buses to serial buses. Rather than a 68-pin parallel connection used in PC Card, ExpressCard utilizes a 26-pin beam on blade serial connection. ExpressCard's use of high performance PCIe and USB 2.0 serial connections already built on to host system reduces complexity and eliminates the problems with signal timing associated with parallel buses.

- ExpressCard is simpler and cheaper to implement than PC Card

- Because ExpressCard harnesses buses that already exist on a host system, it doesn't require a separate ASIC to integrate it into a host system. Unlike PC CardBus and PC Card, in which a controller chip was necessary to bridge between the card slot and the underlying system bus, ExpressCard devices literally plug into either the PCIe or USB 2.0 bus on the system, depending on the ExpressCard type employed. ExpressCard saves in both cost and complexity in this regard.
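The throughput figures in the list above can be checked with a few lines of Python. This is only a sketch: the 2.5Gb/s and 480Mb/s rates come from the FAQ, while the CardBus figure (32 bits wide at 33 MHz, one transfer per clock) is an assumption of mine that the FAQ does not state.

```python
def mbit_to_mbyte(mbit_s: float) -> float:
    """Convert megabits per second to megabytes per second."""
    return mbit_s / 8


# PCIe x1 at 2.5 Gb/s uses 8b/10b encoding, so 8 of every 10 bits carry data.
pcie_x1_per_direction = mbit_to_mbyte(2500 * 8 / 10)
pcie_x1_full_duplex = 2 * pcie_x1_per_direction

# Assumption (not from the FAQ): 32-bit CardBus at 33 MHz, one transfer per clock.
cardbus_peak = 33 * 32 / 8

# USB 2.0 raw signalling rate, before any protocol overhead.
usb2_peak = mbit_to_mbyte(480)

print(f"ExpressCard PCIe, per direction: {pcie_x1_per_direction:.0f} MB/s")
print(f"ExpressCard PCIe, full duplex:   {pcie_x1_full_duplex:.0f} MB/s")
print(f"CardBus peak (assumed):          {cardbus_peak:.0f} MB/s")
print(f"ExpressCard USB 2.0 mode:        {usb2_peak:.0f} MB/s")
print(f"Full duplex PCIe vs CardBus:     {pcie_x1_full_duplex / cardbus_peak:.2f}x")
```

Under that CardBus assumption the ratio comes out a little under 4, which matches the FAQ's "nearly quadrupling".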

Is ExpressCard backward compatible with PCMCIA PC Cards?

Our ExpressCard 34 to PCMCIA PC CardBus 16/32-bit Read-Writer Express2PCC allows computers with ExpressCard slots to use either 16-bit legacy PC Cards or 32-bit PC cardbus Cards. Express2PCC works with host systems featuring native ExpressCard 34 or ExpressCard 54 slots, or an installed PCIe to ExpressCard adapter. If the host operating system supports the PCMCIA PC Card, it should also work in conjunction with the Express2PCC. Sonnet's Qio device provides support for Panasonic P2 PC Card Memory devices on both Mac OS X and Windows. The Qio is available with either ExpressCard, PCIe, or Thunderbolt connections to the host system.

Is PCMCIA PC Card forward compatible with ExpressCard?

For PCIe based ExpressCards, in a word, no. However, it is useful to explain why this is the case. 32-bit PC Card CardBus cards don't provide enough bandwidth to emulate ExpressCard. Furthermore, ExpressCards are completely different from PC Cards in voltages, form factor, physical connectivity and bus technology.

USB Mode ExpressCard Only Devices

While the PCMCIA ExpressCard specification requires all host adapters and slots to support both PCIe and USB 2.0 portions of the ExpressCard bus, several products are now on the market that only support the USB 2.0 mode. While this technically breaks the specification, many consumers have been clamoring for such a device. In response to such demand, products are now appearing on the market that bridge between PCMCIA PC Card and USB 2.0 based ExpressCards. Several new devices behave like USB 2.0 hubs, routing an ExpressCard's USB 2.0 through a PCMCIA PC Card slot. PCMCIA PC Card to USB 2.0-Mode ExpressCard adapters are available as 32-bit and 16-bit PCMCIA PC Card varieties. PC Card USB 2.0 mode ExpressCard host adapters cannot work with any PCIe based ExpressCards. This is true for USB to USB 2.0-Mode ExpressCard adapters like our MicroU2E series as well.

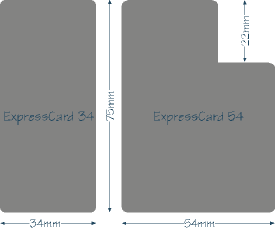

ExpressCard Dimensions

ExpressCards come in two form factors: ExpressCard 34 and ExpressCard 54. The form factors share the same dimensions except for width, from which the names of the form factors are derived. ExpressCards share a length of 75mm and a depth (or thickness) of 5.0mm, the same depth as Type II PC Cards. Both the widths and shapes of the two ExpressCard form factors are different, but the portion of the card which connects to the card slot is an identical 34mm. ExpressCard 34 cards are 34mm wide and rectangular in shape. ExpressCard 54 cards are 54mm wide at their widest point and 34mm wide at the connection point, creating a shape often referred to as a "Fat-L". Either form factor is allowed additional volume extending outside of what would be considered the flush portion of an inserted card. This is referred to as the extended portion of the card, and ExpressCards with such a configuration are referred to as extended cards. The extended portion can exceed the card dimension in any axis, but there are obvious practical limitations to how much. For an excellent example of an ExpressCard with an extended portion on two axes (depth and width) see: ExpressCard 34 to CompactFlash Memory Card Adapter. For an explanation of how ExpressCard modules are used in the two types of ExpressCard slots, please see ExpressCard Slotting.

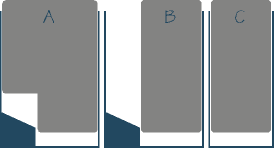

ExpressCard Slotting

ExpressCard slots come in two varieties: those designed for both ExpressCard 54 and 34 cards, and those for ExpressCard 34 cards only. ExpressCard slots are required to provide both PCIe and USB 2.0 functionality, regardless of their size. ExpressCard 54 slots, as pictured in figures A and B on the right, are able to accept both 54 and 34 cards. What PCMCIA describes as a "novel guidance device," which is seen in the lower left corner of figures A and B, physically guides an ExpressCard 34 device to the connector part of the slot. Since the connection part of the card for both types of ExpressCards is identically 34mm, this scheme provides an elegant solution for utilizing both types of cards. Conversely, only ExpressCard 34 cards fit in ExpressCard 34 slots, as pictured in figure C. Paying attention to this last fact is important when shopping for ExpressCard products: if a device only has an ExpressCard 34 slot, then only shop for ExpressCard 34 devices.

PCMCIA literature has expressed that systems deploying multiple ExpressCard slots should lay them out adjacently on a horizontal plane rather than the "stacked slot" convention employed by PC Card slots.

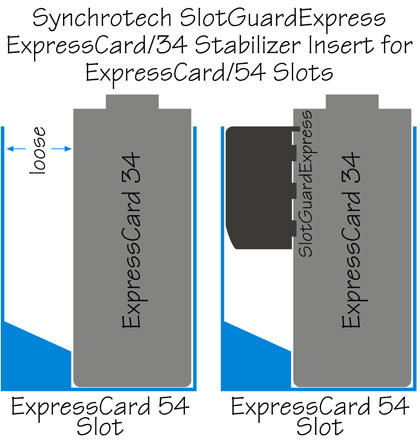

Is there a way to stabilize ExpressCard/34 inside ExpressCard/54 slots?

While it may have seemed a sound idea to engineers designing the original ExpressCard standard, one of the more perplexing (and troublesome) aspects of the design has been using ExpressCard/34 cards in ExpressCard/54 slots. In practice, ExpressCard/34 cards, even when properly seated, aren't stable when inserted in the larger ExpressCard slots. The gap between the card and the larger slot allows cards to be easily dislodged or come loose. This problem is more evident with ExpressCards that have large "extended card" portions and/or cables attached to them. When cards come loose and disconnect during operation, they drop signal, or worse, can damage the ExpressCard and even the ExpressCard slot. This is especially true for cards providing electrical current, like many Serial I/O ExpressCards.

The SlotGuardExpress ExpressCard/34 Stabilizer Insert for ExpressCard/54 Slots protects against ExpressCards being dislodged from their slots. SlotGuardExpress is an inexpensive, non-conductive insert that attaches to the side of an ExpressCard/34 to eliminate the free-play between the card and the ExpressCard slot. SlotGuardExpress is available as a product for individual end users and for Original Equipment Manufacturers (OEMs) who want to include it with their ExpressCard/34 products. SlotGuardExpress puts an end to disrupted connections and equipment damage caused by ExpressCards moving side to side (laterally) in larger slots.

Source:

http://www.synchrotech.com/support/faq-expresscard.html

-

7 hours ago, Tech Inferno Fan said:

Use the expresscard slot with a BPlus PE4C 3.0 for the most fuss free x1 2.0 (4Gbps) eGPU solution.

Thank you @Tech Inferno Fan for your kind support. I'll give it a try.

Have you tried an eGPU with a GTX 1080 before, or has any other member done so, even with a different laptop? Will the card work at full performance? Can I overclock the card?

Best Regards,

-

Dear Respected Members,

Hope that you are doing well,

I would like your advice on using an eGPU solution for the Clevo P570WM with the new GTX 1080. I can use the ExpressCard slot at the front, or I can use the adapter that replaces the WiFi card. Are there any bandwidth restrictions or other issues?

If someone has any experience with this, please share it.

Laptop specs: Clevo P570WM, i7-4930K, 880M SLI, 16GB RAM

Regards,

-

Please consider this solution as well,

http://www.coollaboratory.com/product/coollaboratory-liquid-ultra/

Your video @Mr. Fox

-

Asus ROG GX800 watercooled Gaming-Laptop

-

On 6/27/2016 at 11:41 PM, Dr. AMK said:

Dear @Prema, could you please share your BIOS and vBIOS for my GTX 770M in the Alienware 17 R5? Thanks in advance. My vBIOS is attached, and my BIOS is the original Alienware 17 ver 14.

Dear @Prema, just following up with you: are those BIOS and vBIOS files available?

-

Intel starts sending Kaby Lake Processors to Laptop and Desktop Manufacturers

Kaby Lake is the first Intel CPU to break the 'tick-tock' cycle, which was retired this year. Looking ahead, each Intel manufacturing process will go through three cycles to get the maximum performance out of each process node. Kaby Lake will be the third and final 14nm chip. In 2017 Intel will make the jump to 10nm with Cannonlake, and will then remain on 10nm lithography until 2020, so you can expect a new range of processors every year.

It is expected that the new CPUs will have a TDP of up to 95W and provide native support for several current technologies such as USB 3.1, HDCP 2.2 and Thunderbolt 3. This is an 'optimization' CPU based on the technology introduced in Broadwell and Skylake, so the new processors are not expected to bring a huge performance leap compared to the Skylake CPUs launched last year. The new CPUs will also appear in the new Surface tablets in early 2017.

First details of Intel’s 7th Generation Kaby Lake Core i7-7700K

Regarding the specifications of the Core i7-7700K, this flagship processor of the company (for that platform) is equipped with four cores with HyperThreading technology, for a total of 8 logical cores, at a base frequency of 3.60 GHz reaching 4.20 GHz with Turbo Boost. That compares with the 4.00 GHz base and 4.20 GHz Turbo Boost of the current Core i7-6700K, although this could change since for now we are talking about an engineering sample. The rest of the information covers HD graphics with 24 Execution Units, 256 KB of Level 2 (L2) cache per core and 8 MB of L3 cache. Its launch would take place at the end of the current year, so there is still enough time ahead.

-

Specs:

- 11 TFLOPS FP32

- 44 TOPS INT8 (new deep learning inferencing instruction)

- 12B transistors

- 3,584 CUDA cores at 1.53GHz (versus 3,072 cores at 1.08GHz in previous TITAN X)

- Up to 60% faster performance than previous TITAN X

- High performance engineering for maximum overclocking

- 12 GB of GDDR5X memory (480 GB/s)

https://blogs.nvidia.com/blog/2016/07/21/titan-x/
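The headline numbers in that spec list follow from the core counts and clocks. Here is a small Python sketch of the arithmetic. It assumes each CUDA core retires one fused multiply-add (counted as 2 floating-point operations) per clock, which is the usual convention for peak FP32 figures. The factor of 8 for INT8 (four multiply-accumulates per core per clock) is my assumption about how the 44 TOPS figure is derived, not something the blog post states.

```python
def peak_tera_ops(cuda_cores: int, clock_ghz: float, ops_per_core_per_clock: int) -> float:
    """Theoretical peak throughput in tera-operations per second."""
    return cuda_cores * clock_ghz * ops_per_core_per_clock / 1000


# New TITAN X: 3,584 cores at 1.53 GHz (figures from the spec list).
new_fp32 = peak_tera_ops(3584, 1.53, 2)   # 1 FMA = 2 FLOPs per clock
new_int8 = peak_tera_ops(3584, 1.53, 8)   # assumed: 4 INT8 MACs = 8 ops per clock

# Previous TITAN X: 3,072 cores at 1.08 GHz (figures from the spec list).
old_fp32 = peak_tera_ops(3072, 1.08, 2)

print(f"New TITAN X FP32: {new_fp32:.2f} TFLOPS")
print(f"New TITAN X INT8: {new_int8:.2f} TOPS")
print(f"Old TITAN X FP32: {old_fp32:.2f} TFLOPS")
print(f"Peak FP32 ratio:  {new_fp32 / old_fp32:.2f}x")
```

The results round to the 11 TFLOPS and 44 TOPS quoted above, and the peak FP32 ratio of about 1.65x is in line with the "up to 60% faster" claim.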

-

Guess which company will be the first to include the NVIDIA Pascal GTX 1080 and AMD Polaris GPUs inside their laptops:

1-

2-

3-

4-

-

Congratulations on your unusual achievement. It is something we do not see often, and it proves that a GPU core can be transferred successfully between the desktop and mobile versions. This achievement may motivate others to invent new and useful things as you did.

Waiting for the OC, testing and benchmark results with @Prema's BIOS. Good luck!

-

On 3/26/2016 at 11:25 PM, Prema said:

Sorry I can't use re-seller logos to make Mods for end-user...

I have refunded your donation so you can instead use it to promote your account on TI and also get svl7's vBIOS for your 780M.

Dear @Prema, I followed your advice and supported this fantastic community by upgrading my account to T|I Elite Member - Life. Thank you for your kind support and efforts.

-

Dear @Prema, could you please share your BIOS and vBIOS for my GTX 770M in the Alienware 17 R5? Thanks in advance. My vBIOS is attached, and my BIOS is the original Alienware 17 ver 14.

-

8 hours ago, Mr. Fox said:

Yeah, but 'sharing heat' isn't a good thing to do. Extreme overclocked CPUs get so much hotter than GPUs and doing anything that might increase CPU temps is a bad idea. But, it is good that it is not permanently joined by solder. Being bolted or screwed together is great. That potentially opens the door to some custom heat sink modding that could be more effective than the stock setup. As I suggested before, if works well it really doesn't matter what it looks like. It's covered up and nobody sees it, so results is the only thing that matters. It just looks messy, that's all.

You're absolutely right @Mr. Fox. I had a bad experience with the MSI GT80 Titan (i7 6700HQ, 980M SLI): the temperature was jumping over 90 for no reason on both the CPU and GPU, even after I applied Coollaboratory Liquid Ultra thermal compound. I sold it after one month and never liked it.

-

NVIDIA GeForce GTX 1080 GPU For Notebook’s Specifications Leaked – Get Ready For High-Performance Computing In Mobile Machines

http://wccftech.com/geforce-gtx-1080-gpu-for-notebooks-specifications-leaked/

-

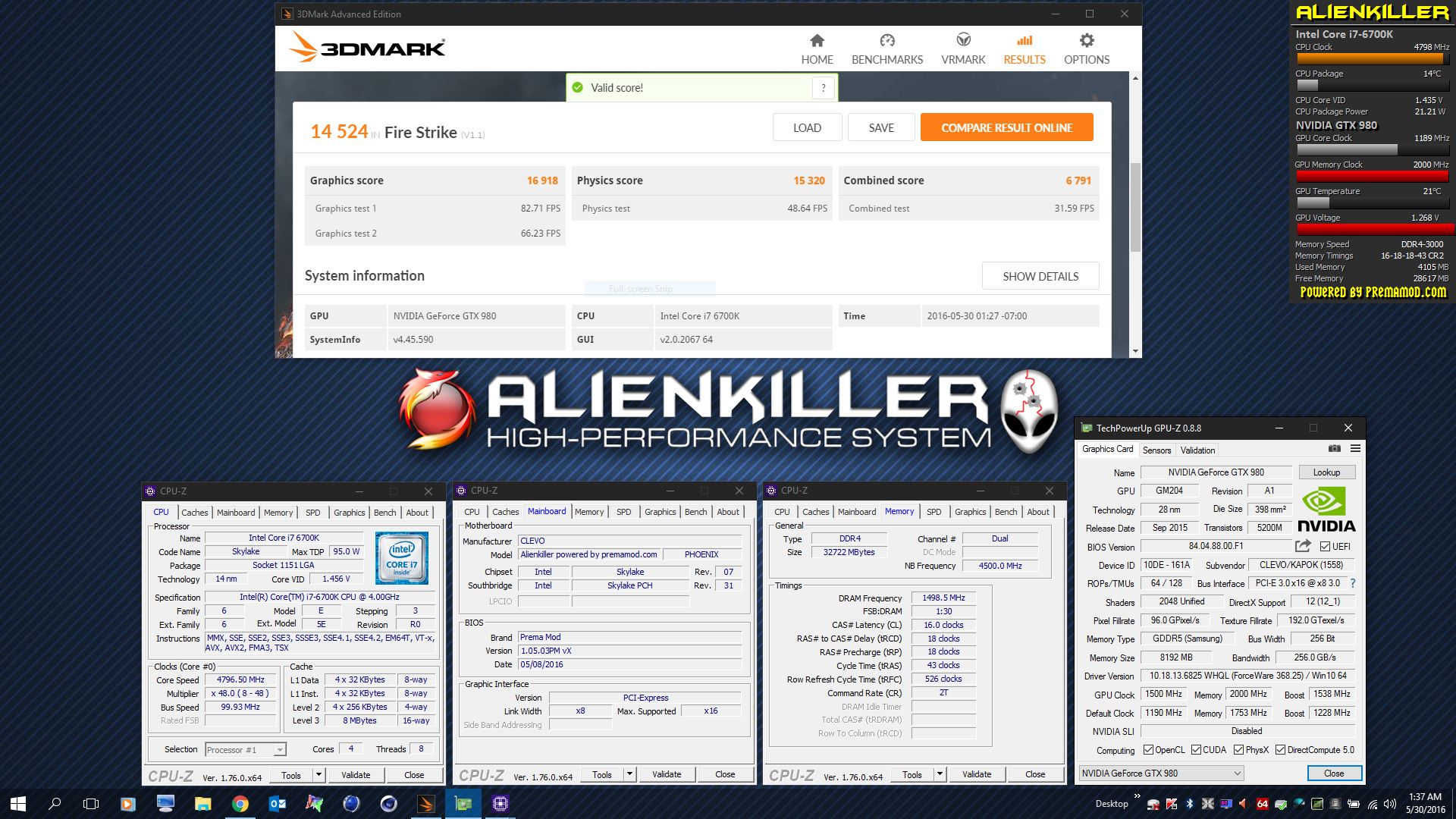

37 minutes ago, Mr. Fox said:

@Mr. Fox This is really impressive. It almost reaches the GTX 980 SLI score with a single card. This is your magic, of course.

-

It’s about time we share first benchmarks of GeForce GTX 1080, new flagship graphics card that will be unveiled tomorrow by NVIDIA.

NVIDIA GeForce GTX 1080, 8GB RAM GDDR5X at 10 GHz

I think the most interesting detail about GTX 1080 is the new memory. NVIDIA has finally used 8GB ram for its flagship card. It’s no longer an exclusive to mobile solutions. Additionally, the GDDR5X modules are clocked at 2500 MHz (which is shown as 5000+ MHz in 3DMark). However the effective clock is 10000 MHz, which means the bandwidth is somewhere around 320 GB/s (assuming it’s 256-bit wide).
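The 320 GB/s estimate follows directly from the effective memory clock and the bus width. Below is a short Python sketch of that calculation. The GTX 1080 numbers are from the paragraph above, and the GTX 1070 and Tesla P100 numbers are taken from the article's own specification table. Only the function and variable names are mine.

```python
def memory_bandwidth_gb_s(effective_clock_mhz: float, bus_width_bits: int) -> float:
    """Peak memory bandwidth in GB/s: transfers per second times bytes per transfer."""
    bytes_per_transfer = bus_width_bits / 8
    return effective_clock_mhz * bytes_per_transfer / 1000  # MB/s -> GB/s


cards = {
    "GTX 1080 (GDDR5X)": (10000, 256),
    "GTX 1070 (GDDR5)": (8000, 256),
    "Tesla P100 (HBM2)": (1408, 4096),
}

for name, (clock_mhz, bus_bits) in cards.items():
    print(f"{name}: {memory_bandwidth_gb_s(clock_mhz, bus_bits):.0f} GB/s")
```

The three results land on 320, 256 and roughly 720 GB/s, matching the figures the article lists. The GTX 1080 value still depends on the 256-bit bus assumption the article makes.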

On the GPU side we have a huge improvement in terms of frequencies. It is said that GTX 1080 can boost up to 1.8 GHz, with base clock around 1.6 GHz. At the time of writing we are not able to confirm the exact reference clock. For such reason I decided to avoid making comparison charts, so this post will essentially tell you what GTX 1080 is capable of and nothing more.

NVIDIA Pascal Series (To be confirmed), May 5th 2016

| Specification | NVIDIA GeForce GTX 1080 | NVIDIA GeForce GTX 1070 | NVIDIA Tesla P100 |
|---|---|---|---|
| GPU | 16nm FF GP104-400 | 16nm FF GP104-200 | 16nm FF GP100-890 |
| CUDA Cores | ? | ? | 3584 |
| Memory Type | 8GB GDDR5X | 8GB GDDR5 | 16 GB HBM2 |
| Base Clock | ? | ? | 1328 MHz |
| Memory Clock | 2500 MHz | 2000 MHz | 352 MHz |
| Effective Memory Clock | 10000 MHz | 8000 MHz | 1408 MHz |
| Memory Bus | 256-bit | 256-bit | 4096-bit |
| Memory Bandwidth | 320 GB/s | 256 GB/s | 720 GB/s |

NVIDIA GeForce GTX 1080 8GB: 3DMark11 Performance

Like always, we are only looking at graphics score. 3DMark11 Performance preset is rendered at 1280×720 resolution. GeForce GTX 1080 scores 27683 points, which is still above overclocked GM200 cards (~23-25k). Worth noting 3DMark11 is not showing correct GPU clocks, however the new driver already supports GTX 1080 by its name.

http://videocardz.com/59558/nvidia-geforce-gtx-1080-3dmark-benchmarks

-

6 hours ago, odin2free said:

Wait

Are you using pads on the die or on the chips?

Dies = paste

Chips = pads

The reason people change pads is because of age.

Older= Pads change

Newer = Dont need to UNLESS you notice something is wrong with the pads such as CRACKS fractures tears etc....

@Dr. AMK Did you actually put paste in that little regulator area (I think those are regulators, too lazy to look up layouts)? I'd just go with pads on those and make sure they touch some copper or aluminium to passively or actively cool, depending on what the entire heatsink cooling setup looks like.

Dear @odin2free, thanks for your advice. For sure I'm using paste for the dies, but I didn't change any pads. They look OK but compressed. The temps for everything are good and I haven't faced any problems. I just want to make sure that everything is perfect.

-

I have the P570WM with dual 330W power supplies and would like to give it a try. How can I get the cards? And can I use the same old heat sinks and the SLI cable from the GTX 780Ms?

-

On 4/2/2016 at 1:06 AM, odin2free said:

Frozencpu is where i get mine.

and no you do not have to but depends on how often you change your paste...

go with .05 or 1.0mm thats what i go with...dont need to go with anything to crazy special.

Thanks @odin2free for your kind advice..

-

We are waiting for all brands to announce support for desktop SLI; so far no one has except MSI. There are many concerns about it, like power consumption and performance limitations.

-

3 hours ago, TheCodeBreaker said:

I will try and explain this the best way I can. First of all if you are hitting temps like that just playing games like Far Cry 4, You need to re-think and re-evaluate your overclocks. Your system should not hit 93C as with that temp it is probably thermal throttling to protect itself from being fried. So go easy with the overclocking, no need to push it so hard for no good reason.

As for your other inquiry about the additional voltage, that is a "no go bro", for 2 reasons. Firstly, most cards are meant to run at certain voltages; the unlocked amount is what you can push it to, and anything past that the card will not boot. At least that is as far as my experience goes with AMD cards, I'm pretty sure Nvidia is the same. Second reason: you're already producing excess heat, and more voltage = more heat = more thermal throttle ~= less performance.

My recommendation for overclocking: go from stock clocks, increase by 50 MHz at a time and run a benchmark; temps should be the same on average. If it fails, increase voltage slightly. Check temps again and make sure they are within the threshold.

The voltage and power range visible in the picture is probably from earlier times when that program was probably not reading it correctly or something. Either way, you dont need more voltage, you have enough heat. do what i mentioned above.

Also, Mar7aba xD

Tbh, I dont know what the issue could be, I know people have reported awesome benchmarks, I am yet to see it. Maybe its because I updated to the latest dell bios, maybe thats locking it down? I dont know tbh, Im going to mess with it later, unless you have some sort of suggestion.

First of all, thank you TheCodeBreaker (and Mar7aba xD) for your kind reply and explanation. I found that the temperature hits the 90s only at the 4K settings of that game, while at 1080p it stays just under 80. So I think the 4K settings with my overclocked 780M SLI are the only problem. Otherwise the svl7 vBIOS is perfect and gives me more power for all my games.

Regards,
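The stepwise procedure recommended in the quoted reply (raise the clock 50 MHz at a time, benchmark, check temperatures) can be sketched as a simple loop. This is only an illustration: `is_stable` and `peak_temp_c` are hypothetical callbacks standing in for a real benchmark run and a real sensor reading, the 85 C limit and 500 MHz cap are placeholder values, and the "increase voltage slightly" step is deliberately left out.

```python
from typing import Callable


def step_overclock(
    stock_mhz: int,
    is_stable: Callable[[int], bool],      # hypothetical: run a benchmark at this clock
    peak_temp_c: Callable[[int], float],   # hypothetical: peak temperature seen at this clock
    step_mhz: int = 50,
    temp_limit_c: float = 85.0,            # placeholder threshold, pick your own
    max_offset_mhz: int = 500,             # placeholder safety cap on the total offset
) -> int:
    """Return the highest clock that stayed stable and within the temperature limit."""
    best = stock_mhz
    clock = stock_mhz + step_mhz
    while clock <= stock_mhz + max_offset_mhz:
        # Stop at the first step that crashes the benchmark or runs too hot.
        if not is_stable(clock) or peak_temp_c(clock) > temp_limit_c:
            break
        best = clock
        clock += step_mhz
    return best


# Simulated card: stable up to 1000 MHz, temperature rising 0.1 C per extra MHz.
result = step_overclock(
    stock_mhz=850,
    is_stable=lambda mhz: mhz <= 1000,
    peak_temp_c=lambda mhz: 60 + (mhz - 850) * 0.1,
)
print(result)
```

With the simulated card the loop settles on 1000 MHz. Lowering `temp_limit_c` makes it stop earlier, which is the point of the advice: temperature, not voltage, is the limit to watch.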

I just want to confirm before purchasing questions

in DIY e-GPU Projects

Posted · Edited by Dr. AMK

This is the GTX 1080 full Specs:

http://www.geforce.com/hardware/10series/geforce-gtx-1080

They mentioned that:

- Bus Support: PCIe 3.0

- Graphics Card Power: 180 W

- Recommended System Power: 500 W

So will this need a special power supply with the BPlus PE4C 3.0?
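As a rough way to think about the power question: the 500 W figure is a recommendation for a whole desktop system, while in an eGPU setup the external power supply mainly has to feed the card itself. The Python sketch below sizes a supply from the 180 W card power with a safety margin. The 30% headroom and the list of candidate PSU ratings are assumptions for illustration only, not figures from NVIDIA or BPlus.

```python
from typing import Iterable, Optional


def min_psu_watts(card_power_w: float, headroom: float = 0.3) -> float:
    """Card power plus a safety margin (headroom is an assumed rule of thumb)."""
    return card_power_w * (1 + headroom)


def pick_psu(card_power_w: float, available_psus_w: Iterable[float],
             headroom: float = 0.3) -> Optional[float]:
    """Smallest available PSU rating that covers the card with headroom, else None."""
    needed = min_psu_watts(card_power_w, headroom)
    candidates = [watts for watts in sorted(available_psus_w) if watts >= needed]
    return candidates[0] if candidates else None


gtx_1080_power_w = 180                 # "Graphics Card Power" from the spec page
hypothetical_psus_w = [120, 220, 300]  # made-up ratings, for illustration only

print(f"Target: about {min_psu_watts(gtx_1080_power_w):.0f} W")
print(f"Pick:   {pick_psu(gtx_1080_power_w, hypothetical_psus_w)} W")
```

With these made-up ratings the 220 W unit falls short of the target and the 300 W one is chosen. Check the adapter's documentation for what it actually supports.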

The PE4C V3.0 is designed for notebook PCs. It converts a PCI Express 16X add-on card to an ExCard (ExpressCard), mPCIe or PCIe x1 connector at up to Gen3 (8Gbps) speed.

This adapter allows you to use your existing PCI-E 16X Card in the notebook PC for gaming.

Source:

http://www.hwtools.net/Adapter/PE4C V3.0.html