Leaderboard

Popular Content

Showing content with the highest reputation on 09/27/12 in all areas

-

So, the Acer section is very empty and sad, success stories are worth sharing, and a lot of 5750G owners complain about overheating, so I decided to share my little intervention on my 5750G.

The problem, as we all know, is that this laptop's fan runs at a snail's pace. As far as I can tell, it never goes above 40% under stock settings. Everyone who has bothered flashing the BIOS on this machine knows how fast that fan can spin, but it never does. The problems this caused were as follows:

First: Turbo Boost on the CPU was always unusable. Under load, the CPU would quickly hit 90+ degrees and throttle, and the machine would slow down... it sucked.

Second: The GPU would hit 95+ degrees at stock speeds under load and crash during gaming. Not fun.

This meant running lower clock speeds on the CPU and/or GPU, and generally gaming at lower settings all the time. It was a waste for such a machine.

In a twisted turn of good fortune, my desktop mobo shorted out a MOSFET during overclocking (broken AUTO-PLL on an ASUS mobo...). While looking for someone to repair it, I found a guy handy with a soldering iron and heat gun, who told me about his ongoing little mods and tweaks. Among them was a self-made fan controller (he'd made about 10 of them) for overriding the fan control on poorly implemented machines. I jumped for joy. We got to it, and the results are in the attached pics.

----

Actual results:

Under FurMark, with the GT 540M GPU overclocked to 850/1050, the GPU reached a max temperature of 93 degrees Celsius, after which it settled at 91 degrees.

Under full load in Prime95, the i7-2630QM CPU maxed out with Turbo Boost at around 77 degrees (down from 95+!!!!).

With both Prime95 and FurMark running at those settings, the maximum GPU temperature was a stable 93 degrees, with the CPU reaching about 84 degrees Celsius.

Unfortunately, this GPU overclock was not stable enough for StarCraft 2. Even though the game runs wonderfully (I use full Extreme settings at 1366x768 with anti-aliasing enabled, and it stays within 35-50 fps), it absolutely NUKES the GPU, which rises steadily to 93-94-95 degrees and then crashes. Leaving the GPU at stock speeds keeps it stable at about 91 degrees, but then you can no longer max out the quality without a performance hit. Still, it's an unimaginable improvement over stock.

----

About the fan and controller:

The fan appears to be PWM-type, receiving an actual max voltage of 3.3V (even though it's rated for 5V!!!). I have no idea why Acer pulled such a dick move, except for one reason: it IS loud. It's a very noisy fan even at 60-70% speed, and at 100% it's a goddamn turbine. The airflow, however, is NOT impressive. At max speed, if you put your hand near the vent, you'll feel a gentle hot flow; I expected at least a moderate wave. Installing a higher-performance fan would be a very good idea.

Regarding the controller: it was made by my new friend. He has several and can build more. I don't know exactly what the design is, but if you're interested, I can ask him. Potential buyers could perhaps have him send one; I don't know.

The controller draws power from a 5V line (I'm assuming USB, but I'm not sure; it's the cables going up to the top-right of the mobo in the bigger picture), because the dedicated fan line only supplies 3.3V. It takes input from two heat sensors (circled in the pic) placed on the CPU and GPU heatsinks, averages the two temperatures, and increases fan speed accordingly. It then sends the drive pulses to the fan.

Well, that's about it. If you have any questions, just ask.

2 points
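The exact design of this controller isn't known, but the behavior described above (average the two heatsink sensor readings, then raise fan speed with that average) amounts to a simple fan curve. A minimal Python sketch of that idea follows. It is not the actual controller's logic, and the thresholds and duty-cycle limits (`T_LOW`, `T_HIGH`, `MIN_DUTY`, `MAX_DUTY`) are hypothetical values chosen for illustration, not measurements from this machine.

```python
# Hypothetical fan-curve sketch: the real controller's design is unknown.
# Assumed values below are for illustration only.

T_LOW = 40.0      # degrees Celsius; at or below this the fan idles (assumed)
T_HIGH = 90.0     # degrees Celsius; at or above this the fan runs flat out (assumed)
MIN_DUTY = 30.0   # idle PWM duty cycle in percent (assumed)
MAX_DUTY = 100.0  # full-speed PWM duty cycle in percent


def mean_temp(cpu_c: float, gpu_c: float) -> float:
    """Mean of the CPU and GPU heatsink sensor readings."""
    return (cpu_c + gpu_c) / 2.0


def duty_for(temp_c: float) -> float:
    """Map a temperature to a PWM duty cycle (percent) with a clamped linear ramp."""
    if temp_c <= T_LOW:
        return MIN_DUTY
    if temp_c >= T_HIGH:
        return MAX_DUTY
    frac = (temp_c - T_LOW) / (T_HIGH - T_LOW)
    return MIN_DUTY + frac * (MAX_DUTY - MIN_DUTY)


def fan_duty(cpu_c: float, gpu_c: float) -> float:
    """Duty cycle driven by the average of the two sensors."""
    return duty_for(mean_temp(cpu_c, gpu_c))


if __name__ == "__main__":
    # Sensor pairs loosely based on the temperatures reported in the post.
    for cpu, gpu in [(35, 40), (77, 91), (84, 93)]:
        print(f"CPU {cpu} C, GPU {gpu} C -> fan {fan_duty(cpu, gpu):.0f}%")
```

Averaging, as described, means a hot GPU paired with an idle CPU gets a gentler fan response than taking the maximum of the two sensors would; a max-based curve reacts faster to whichever chip is hotter at the cost of more noise.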

-

SUCCESS! Finally something is working. I found this: Alienware. Apparently it won't POST without RAM. That's a first for me. The R2 board is now running in my R1. I have an R2 motherboard, an 840QM CPU, and the R2 heat exchanger, since the CPU/SB are in physically different locations on the R2 board. Now I'm wondering whether the video would have worked without my MXM system info BIOS mod. I hate to pull the chips again just to reflash and find out. Does anyone know if the R1 LCD works natively on an R2 board? Testing will continue! I'm not sure whether the audio board will work, since the R1 and R2 audio boards have different part numbers. More later.

2 points

-

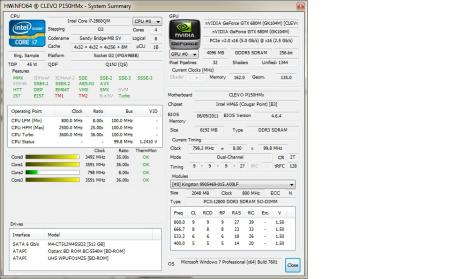

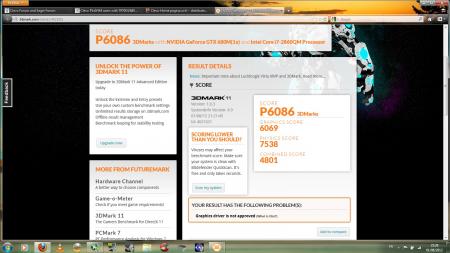

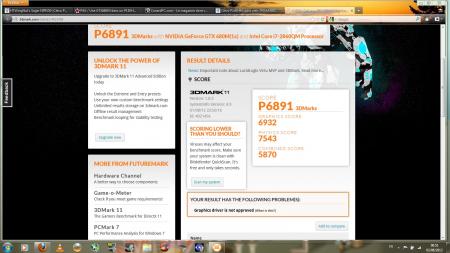

GTX 680M in P150HM

3DMark 2011, stock

3DMark 2011, OC 853/2400

Crysis 2: GTX 580M OC (721 core / 1700 RAM) vs. GTX 680M OC (853 core / 2400 RAM)

Found this video tutorial on changing components in your P1X0HM/EM (the GPU part is at 6:16).

On a P150HM, only the MSI 80.04.33.00.24 and Clevo 80.04.29.00.01 vBIOSes are compatible.

Some benchmarks @1005/2400: http://forum.techinferno.com/clevo-sager/1924-p150hm-gtx680m-yes-we-can-3.html#post27637

Just for fun, a 4-year-old tri-CrossFire desktop vs. P150HM/GTX 680M: http://forum.techinferno.com/clevo-sager/1924-p150hm-gtx680m-yes-we-can-3.html#post28594

Backplate mod: http://forum.techinferno.com/clevo-sager/1924-p150hm-gtx680m-yes-we-can-4.html#post31577 and http://forum.techinferno.com/clevo-sager/1924-p150hm-gtx680m-yes-we-can-4.html#post31527

Crysis 2 video, 1600x900 DX11 Ultra + HD textures @1006/2400: http://forum.techinferno.com/clevo-sager/1924-p150hm-gtx680m-yes-we-can-4.html#post31552

Crysis 3 video, 1600x900 DX11 Ultra, AA 2x @1032/2400: http://forum.techinferno.com/clevo-sager/1924-p150hm-gtx680m-yes-we-can-9.html#post42374

Far Cry 3 video, 1600x900 DX11 Ultra, AA 2x @1019/2400: http://forum.techinferno.com/clevo-sager/1924-p150hm-gtx680m-yes-we-can-10.html#post43122

Crysis 3 performance comparison (1600x900 vs. 1920x1080): http://forum.techinferno.com/clevo-sager/1924-p150hm-gtx680m-yes-we-can-10.html#post43328

3DMark 2011 and 3DMark 2013 scores with the 326.80 beta driver: http://forum.techinferno.com/clevo-sager/1924-p150hm-gtx680m-yes-we-can-11.html#post65456

2860QM QS vs. 2960XM OEM performance comparison: http://forum.techinferno.com/clevo-sager/1924-p150hm-gtx680m-yes-we-can-11.html#post70475

1 point

-

IT'S ALIVE!!! Just got my computer back from Dell. They replaced the motherboard, video card, and CD drive. Not too bad for the price I paid for them to fix all that. Now it'll prolly be a while before I can con my wife into letting me upgrade the GPU in it :(

1 point

-

Nvidia, definitely; it's worth the price difference, IMO. I was going for the 7970M for my new laptop, but I found out about the Enduro issues some Clevo users were having, and that really turned me off AMD :/

1 point

-

1 point

-

Very good, in-depth instructions. I was experiencing the BSOD and SL issue on my 7870, and tech support released a beta vBIOS for me to try out. I had never flashed a BIOS before, and this guide really helped!

1 point

-

Thank you, Natalia, for investigating that topic. Maybe you can even get the release notes for that version.

1 point

-

Hahah, sorry, I keep thinking everyone has easy access to it. So this is how I carry around my pax; someone made me this pouch.

1 point

-

Wow, thanks, the breakdown really helped. Considering what you said, I think I'll look into swapping out the 5738G for a better system. You know, it's funny that you mentioned the Dell Inspiron 1545, since my sister has one. Wish me luck in trying to convince her to swap it for mine.

1 point

-

@AlexandreMPB I had the same problem. Go into the installation folder for the NVIDIA driver and manually install nvision. After that, reboot and connect your computer to the TV via HDMI out; the 3D menu will then appear in the NVIDIA Control Panel.

1 point

-

<a name="implementations"></A>Implementations moved to a dedicated thread: http://forum.techinferno.com/diy-e-gpu-projects/6578-implementations-hub-tb-ec-mpcie.html#post89707

1 point